Enhancing System Reliability via Multi-Version Machine Learning and Rejuvenation ― A Case Study on Multi-Version Perception with Time-Triggered Rejuvenation in an AV Simulator

2026.03.31 08:15

Researcher Information

Wen Qiang (Advisor: Fumio Machida)

Abstract

Background

Recent advances in Machine Learning (ML) have revolutionized various application domains, including text, speech, image, video processing, control, and even the arts. ML has also been widely adopted in safety-critical applications such as autonomous driving and healthcare. In autonomous vehicles, perception systems play a crucial role by identifying roads, traffic signs, vehicles, and pedestrians to create an accurate map of the environment, enabling safe navigation.

However, ML-based perception systems are inherently uncertain due to their probabilistic decision-making nature. Additionally, they are vulnerable to transient faults (e.g., bit flips in weight matrices) and malicious cyber-attacks (e.g., adversarial inputs), which can lead to misclassifications with severe consequences, including accidents and loss of life. Ensuring the reliability of these systems remains a significant challenge.

Proposed method

N-version programming (NVP) is a promising approach to enhancing reliability by introducing diversity in failure characteristics. This method enables a healthy majority to outvote faulty or compromised modules, improving system safety. While previous studies have explored the benefits of NVP in ML-based perception systems, they primarily focus on mitigating classification errors rather than addressing adversarial attacks or transient faults. Moreover, NVP alone provides initial diversity but fails to maintain long-term resilience against persistent adversaries.

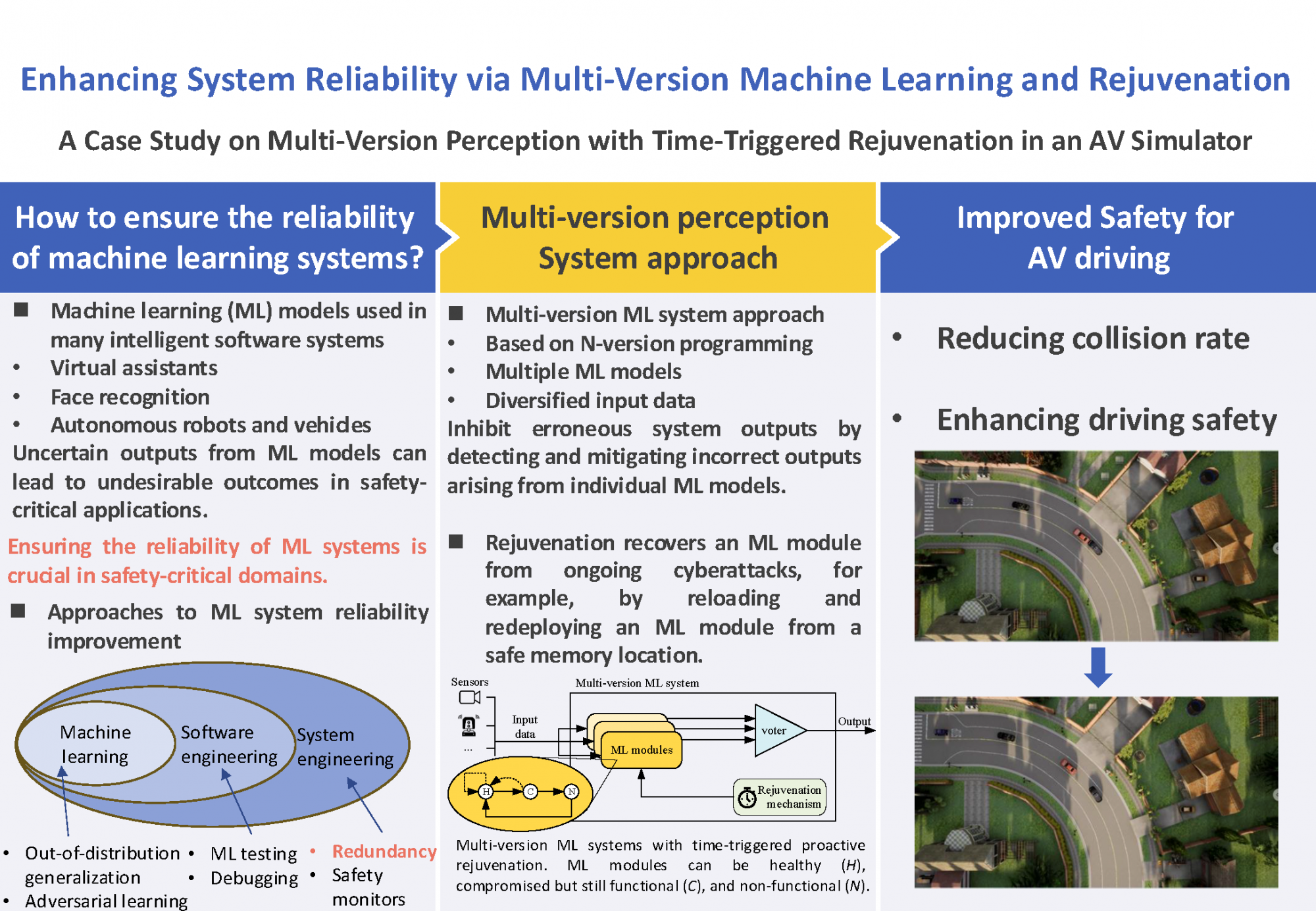

To address these limitations, we propose a novel architecture that integrates multi-version ML with reactive and proactive rejuvenation to enhance the reliability of safety-critical ML systems. Our approach defines decision rules to improve fault tolerance, supporting both full fault masking and degraded reliability modes when multiple ML modules fail. Fig. 1 illustrates our architecture and its components.
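As a rough illustration of how such decision rules could distinguish full fault masking from a degraded mode, the sketch below votes over the class labels returned by the modules. The function name `decide`, the mode names, and the specific thresholds are assumptions made for this example; the actual rules are defined in the referenced publication and may differ.

```python
from collections import Counter
from typing import Optional, Sequence, Tuple


def decide(outputs: Sequence[Optional[str]]) -> Tuple[Optional[str], str]:
    """Combine per-module labels; None marks a module that produced no output.

    Hypothetical rule set (an assumption, not the published one):
    - "masked": a strict majority of ALL modules agrees on a label.
    - "degraded": only a strict majority of the modules that answered agrees.
    - "no_agreement" / "no_output": no usable decision.
    """
    n = len(outputs)
    available = [o for o in outputs if o is not None]
    if not available:
        return None, "no_output"
    label, votes = Counter(available).most_common(1)[0]
    if votes > n // 2:
        return label, "masked"
    if votes > len(available) // 2:
        return label, "degraded"
    return None, "no_agreement"


if __name__ == "__main__":
    print(decide(["car", "car", "bike"]))   # two of three agree
    print(decide(["car", None, None]))      # only one module answered
    print(decide(["car", "bike", None]))    # the two answers conflict
```

With three modules, one faulty output is outvoted, while the loss of two modules still yields an answer that the caller can treat with reduced trust.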

Multiple sensors of different types feed into the ML modules, each of which may internally select a subset of these inputs or be prepared to detect and sideline faulty sensors. An ML module can execute a combination of NNs and other decision modules to perform the classification and prediction task at hand. For example, for autonomous driving, an ML module may include NNs for vehicle, passenger and obstacle detection, traffic sign detection, and face-based intention prediction (e.g., to learn if pedestrians wish to cross the road), and it may invoke some of these NNs depending on prior NN outputs (e.g., on the number of pedestrians detected).

In this work, we focus on modeling rejuvenation, and in particular on time-triggered proactive rejuvenation, which rejuvenates a module even while it is still healthy so that compromised modules are not missed when detection fails. Rejuvenation recovers an ML module from ongoing cyberattacks, for example, by reloading and redeploying the module from a safe memory location. Although an ML module cannot process sensor data while rejuvenating, the entire system may profit from a proactive rejuvenation mechanism (in addition to a reactive one that returns non-functional modules to the healthy state). Such a mechanism can restore the full capacity of an ML module and mitigate error probability dependency between modules by reducing prolonged exposure to potential faults.

Fig. 1: Multi-version ML systems with time-triggered proactive rejuvenation. ML modules can be healthy (H), compromised (C), but still functional, or failed (F) and non-operational.
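The interplay of module states and rejuvenation can be sketched as a small discrete-time simulation. Everything here is an assumption made for illustration: the class names, the round-robin order, and the `interval` and `duration` parameters (measured in abstract ticks) are hypothetical and are not taken from the study's implementation.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Module:
    name: str
    state: str = "H"            # "H" healthy, "C" compromised, "F" failed
    rejuvenating_left: int = 0  # remaining ticks of an ongoing rejuvenation

    @property
    def available(self) -> bool:
        # Compromised modules still produce output; failed or rejuvenating ones do not.
        return self.rejuvenating_left == 0 and self.state != "F"


class TimeTriggeredRejuvenator:
    def __init__(self, modules: List[Module], interval: int, duration: int):
        self.modules = modules
        self.interval = interval  # ticks between proactive triggers
        self.duration = duration  # ticks one rejuvenation takes
        self.next_index = 0
        self.tick_count = 0

    def tick(self) -> None:
        self.tick_count += 1
        # 1) Progress ongoing rejuvenations; a finished one yields a healthy module.
        for m in self.modules:
            if m.rejuvenating_left > 0:
                m.rejuvenating_left -= 1
                if m.rejuvenating_left == 0:
                    m.state = "H"
        # 2) Reactive rejuvenation: failed modules are restarted immediately.
        for m in self.modules:
            if m.state == "F" and m.rejuvenating_left == 0:
                m.rejuvenating_left = self.duration
        # 3) Proactive rejuvenation: on the timer, pick the next module in
        #    round-robin order regardless of whether it looks healthy.
        if self.tick_count % self.interval == 0:
            m = self.modules[self.next_index]
            self.next_index = (self.next_index + 1) % len(self.modules)
            if m.rejuvenating_left == 0:
                m.rejuvenating_left = self.duration


if __name__ == "__main__":
    mods = [Module("A"), Module("B", state="C"), Module("C", state="F")]
    rj = TimeTriggeredRejuvenator(mods, interval=5, duration=2)
    for _ in range(12):
        rj.tick()
    print([(m.name, m.state, m.available) for m in mods])
```

Choosing `interval` larger than `duration` keeps at most one module in proactive rejuvenation at a time, so a three-version system retains two voting modules during the procedure.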

Experiment

To evaluate the practicality of our approach, we implemented a multi-version perception system with rejuvenation for AVs and conducted the analysis using the CARLA AV simulator. Specifically, we focus on assessing the impact of rejuvenation of a multi-version perception system for driving safety. We simulate a three-version perception system incorporating a time-triggered rejuvenation mechanism. Compromised ML models are generated using PyTorchFI, by introducing artificial faults into the ML models, which simulate the effects of an attack or transient fault. We then compare the AV driving behavior across eight scenarios with rejuvenation to those without rejuvenation to assess its impact on AV driving safety.

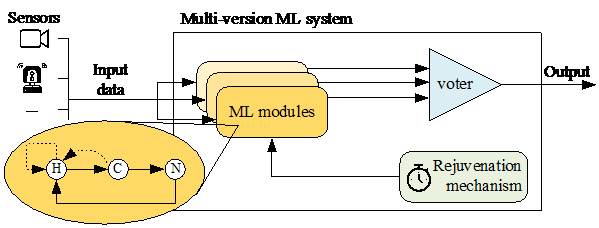

We utilize the CARLA AV simulator and the cooperative driving co-simulation framework, OpenCDA, to simulate various driving scenarios. In the OpenCDA simulation, each AV is equipped with sensors that capture data from both the surrounding environment and the ego vehicle, such as 3D LiDAR points and Global Navigation Satellite System (GNSS) data. This collected data is processed by the perception and localization systems, enabling the detection and localization of objects. The resulting perception and localization outputs are passed to the planning system, which uses this data to compute the AV’s trajectory, adjusting the vehicle’s acceleration, speed, and steering based on the generated path. The final trajectory and control instructions are then forwarded to the control system, which executes the necessary commands to control the vehicle’s movements.

In this study, we focus on the perception system. We select eight routes, with two routes chosen from each of the maps Town02, Town03, Town04, and Town05 in CARLA, as shown in Fig. 2. The starting points of the routes are marked with ovals, while the endpoints are indicated by stars. During these simulation runs, the ego AV must navigate based on its perception system, which is responsible for accurately detecting other vehicles and road obstacles. This setup allows us to evaluate the performance of multi-version perception systems with time-triggered rejuvenation under diverse scenarios and road configurations.

Fig. 2: Adopted maps and routes in Town02, Town03, Town04, and Town05 of CARLA simulator.

We employ YOLOv5 in the perception module for autonomous driving within CARLA. YOLOv5 is an object detection framework that uses a single-stage detection pipeline, directly predicting bounding boxes and class probabilities from images. Specifically, we deploy three variants of YOLOv5 (YOLOv5s6, YOLOv5m6, and YOLOv5l6) as the healthy models; using different variants lets us simulate a multi-version perception framework. We then compromise these models with PyTorchFI, using its runtime perturbation feature for weights and neurons in DNNs. This functionality is crucial for simulating real-world scenarios where models may encounter unexpected disruptions. Concretely, we employ PyTorchFI’s random_weight_inj function with a weight range of (-100, 300) to produce the compromised models, and then assess the multi-version perception system under these perturbed states.
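To make the idea of a random weight injection concrete, the following standard-library sketch overwrites one randomly selected weight with a value drawn from the range (-100, 300). It is a stand-in and not PyTorchFI: the `Weights` type, the function `inject_random_weight`, and the assumption that exactly one weight is replaced by a uniformly drawn value are all illustrative choices made for this example.

```python
import random
from typing import Dict, List, Tuple

# Hypothetical toy representation: layer name -> flattened list of weights.
Weights = Dict[str, List[float]]


def inject_random_weight(
    weights: Weights, low: float, high: float, rng: random.Random
) -> Tuple[str, int, float]:
    """Overwrite one randomly chosen weight in place with a value from [low, high].

    Returns the (layer, index, new_value) that was perturbed.
    """
    layer = rng.choice(sorted(weights))          # pick a layer
    index = rng.randrange(len(weights[layer]))   # pick a position in that layer
    value = rng.uniform(low, high)               # draw the faulty value
    weights[layer][index] = value
    return layer, index, value


if __name__ == "__main__":
    toy = {"conv1": [0.1, -0.2, 0.3], "conv2": [0.05, 0.0]}
    where = inject_random_weight(toy, -100.0, 300.0, random.Random(0))
    print(where, toy)
```

Because typical trained weights are small in magnitude, a single value drawn from such a wide range is usually enough to distort the outputs of a layer, which is why it serves as a proxy for an attack or transient fault.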

Evaluation Metric: To compute driving safety, we measure the collision rate as the ratio of collision frames to the total frames. Additionally, we report the frame at which the first collision occurs and the total number of frames as part of our evaluation metrics. Each route is evaluated over five runs, both with and without rejuvenation, and the average values of the metrics, along with the total number of collisions observed across these runs, are recorded. These metrics offer an evaluation of the safety of the three-version perception system with rejuvenation in different scenarios, with a primary focus on vehicle-to-vehicle collision scenarios in this study.
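The metrics described above can be computed along the following lines. This is a minimal sketch that assumes each run is logged as a per-frame list of collision flags and that frames are counted from zero; the function names and the log format are hypothetical and are not taken from the released artifact.

```python
from statistics import mean
from typing import Dict, List, Optional, Sequence


def collision_rate(collided: Sequence[bool]) -> float:
    """Ratio of collision frames to total frames for a single run."""
    return sum(collided) / len(collided) if collided else 0.0


def first_collision_frame(collided: Sequence[bool]) -> Optional[int]:
    """Index (0-based, an assumption) of the first collision frame, or None."""
    for i, hit in enumerate(collided):
        if hit:
            return i
    return None


def summarize(runs: List[Sequence[bool]]) -> Dict[str, Optional[float]]:
    """Average the per-run metrics over all runs of one route."""
    rates = [collision_rate(r) for r in runs]
    firsts = [f for f in map(first_collision_frame, runs) if f is not None]
    return {
        "mean_collision_rate": mean(rates),
        "mean_total_frames": mean(len(r) for r in runs),
        "mean_first_collision_frame": mean(firsts) if firsts else None,
        "runs_with_collision": len(firsts),
    }


if __name__ == "__main__":
    runs = [[False, False, True, True], [False, False, False, False]]
    print(summarize(runs))
```

Runs without any collision are excluded from the average first-collision frame, so that value is reported as `None` when no run collides.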

Results

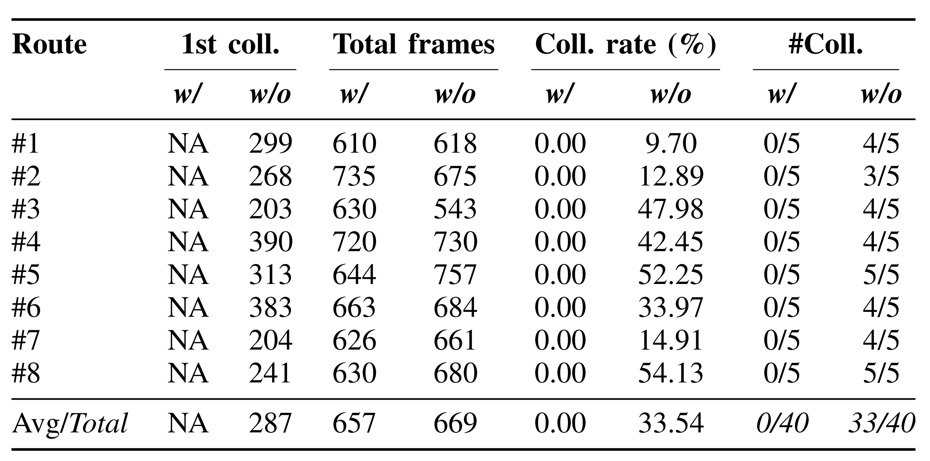

In our study, we investigate the effects of a time-triggered rejuvenation mechanism within multi-version perception systems implemented across eight distinct route scenarios for AVs. We compare collision data between systems with rejuvenation (w/) and those without (w/o) to assess driving safety. The experimental results, presented in Table 1, show that the system with rejuvenation consistently avoids collisions across all 40 runs in all tested routes, achieving a collision rate of 0%. In contrast, significant collision rates are observed without rejuvenation, ranging from 9.70% to 54.13% across different routes, with some scenarios recording collisions in all runs. On average, the collision rate without rejuvenation is 33.54%, with a standard deviation of 18.53%, and the first collision frame occurs on average at frame 287. These results demonstrate the effectiveness of the rejuvenation mechanism in enhancing driving safety within multi-version perception systems, mitigating the impact of compromised and non-functional ML models, and significantly reducing the risk of collisions.

Table 1: Collision data of the multi-version perception system w/ and w/o rejuvenation over different routes.

Conclusion

In conclusion, this study demonstrates that integrating multi-version machine learning with time-triggered proactive rejuvenation significantly enhances the reliability and safety of ML-based perception systems for autonomous vehicles. The experimental results reveal that the system employing rejuvenation achieved a 0% collision rate across all scenarios, in stark contrast to the non-rejuvenated system’s average collision rate of 33.54%. These findings underscore the effectiveness of rejuvenation in mitigating the adverse effects of compromised or faulty ML models. Moreover, the proposed approach provides a robust framework that can be extended to other safety-critical applications where reliability is paramount. Future research may explore real-world implementations and further optimizations to bridge the gap between simulation and practical deployment.

Afterword

Reflecting on the journey of this research, I recall the long nights of troubleshooting simulation errors and fine-tuning the rejuvenation mechanisms, a process both challenging and immensely rewarding. One of the creative approaches in this work was the combination of N-version programming with proactive rejuvenation, a method that required stepping outside conventional ML reliability techniques to embrace more comprehensive system reliability measures. Data collection in the simulated environment presented its own set of challenges, from ensuring the consistency of sensor inputs in CARLA to accurately simulating fault conditions with PyTorchFI. The sweat and persistence required in such projects not only enhanced my technical skills but also built resilience, a quality indispensable in research.

Data Used

The artifact for this study is publicly available on GitHub at: https://github.com/QWen0116/Three-version-perception-with-rj.

The repository contains all the code, experimental configurations, and accompanying documentation required to reproduce the experiments conducted on the multi-version perception system with rejuvenation. For detailed installation and usage instructions, please refer to the README file included in the repository.

In addition, the related dataset is available at the following link:

https://data.mendeley.com/datasets/xzryjd5thf/1

Acknowledgment

This work was supported by JST SPRING Grant Number JPMJSP2124 and partly supported by JSPS KAKENHI Grant Number 22K17871. We would like to thank Júlio Mendonça and Marcus Völp (Interdisciplinary Centre for Security, Reliability and Trust (SnT), University of Luxembourg) for their technical advice and collaborative research on the joint publications.

References

Qiang Wen, Júlio Mendonça, Fumio Machida and Marcus Völp, “Multi-version Machine Learning and Rejuvenation for Resilient Perception in Safety-critical Systems”, In Proc. of the 55th Annual IEEE/IFIP International Conference on Dependable Systems and Networks (DSN), pp. 720-733, 2025.
