Overview

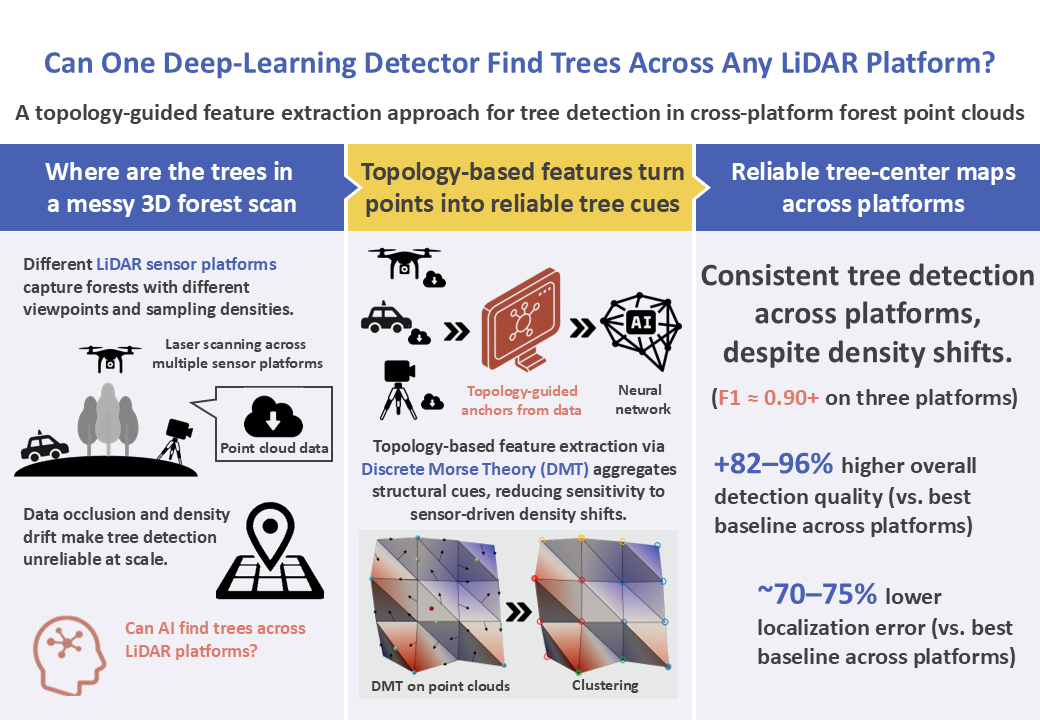

Research Background

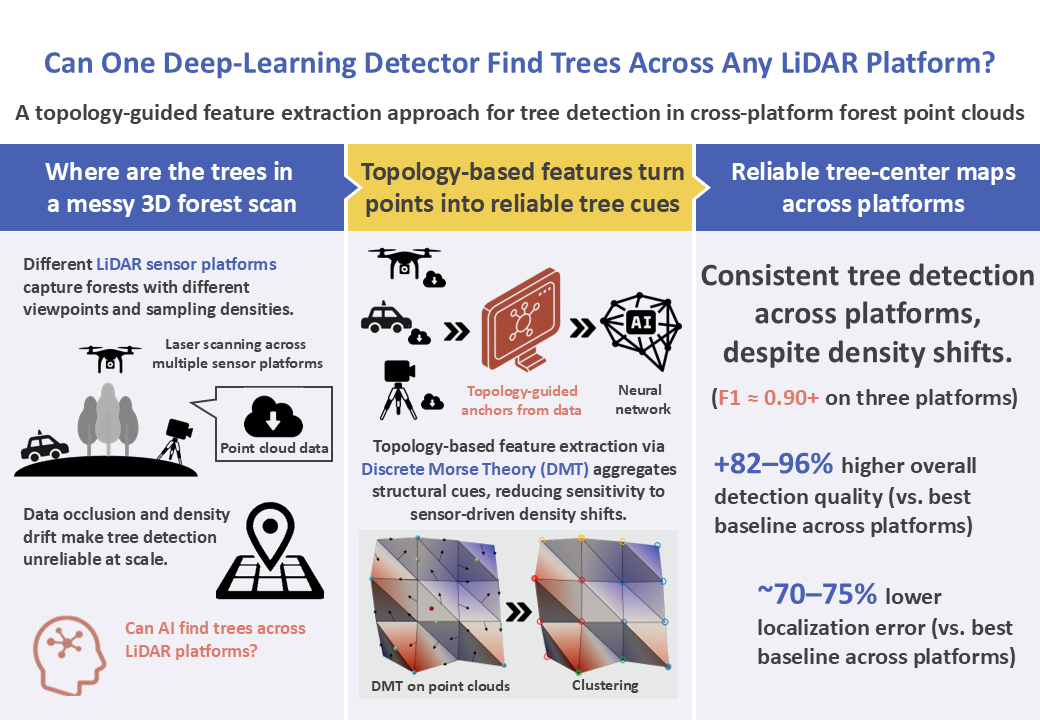

In recent years, LiDAR (Light Detection And Ranging) point clouds have become a key data source for forest monitoring because they capture three-dimensional forest structure in a reliable and objective way. A basic but critical task is individual tree detection, where the goal is to estimate the ground-plane location of each tree stem. Accurate tree locations support practical operations such as tree counting, mapping, and long-term change monitoring.

However, detecting individual trees from point clouds remains difficult in real forests. Forest scenes contain heavy geometric clutter, frequent self-occlusion, and overlapping crowns, which can hide stems and weaken the shape cues used by many detectors. In addition, forest surveys increasingly rely on multiple LiDAR platforms, such as terrestrial (TLS), mobile (MLS), and unmanned aerial (ULS) systems, each of which observes forests from a different viewpoint and produces different sampling densities.

Because of these platform differences, the same forest can look very different from one point cloud to another. This creates a practical gap: a detector that works well on one platform may degrade on another because occlusion patterns and sampling density shift, and methods that process points directly can become computationally expensive at large scale. There is therefore a strong need for a tree detection framework that is both robust across platforms and efficient enough for large forest scenes, without relying on platform-specific heuristics.

Proposed Method

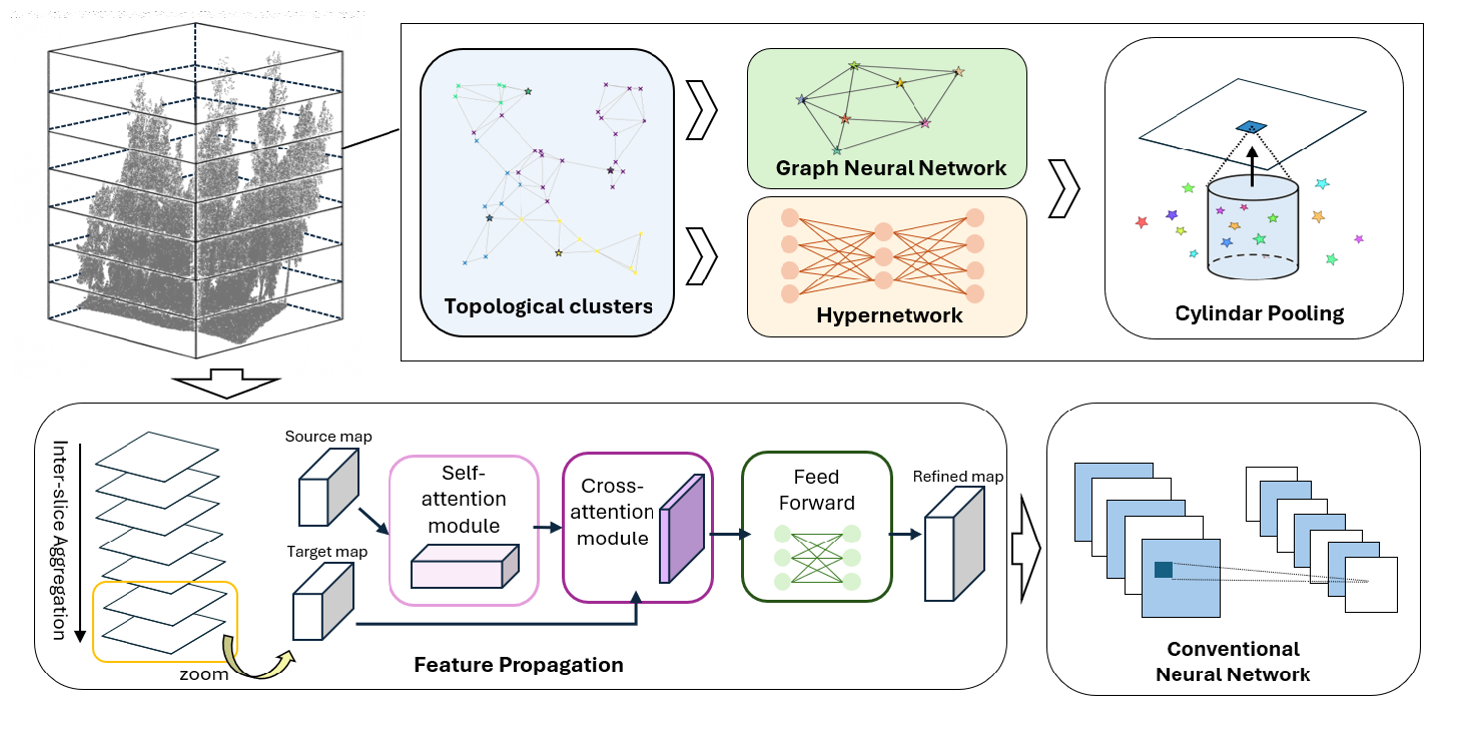

We explain the proposed tree-center detection framework in three stages.

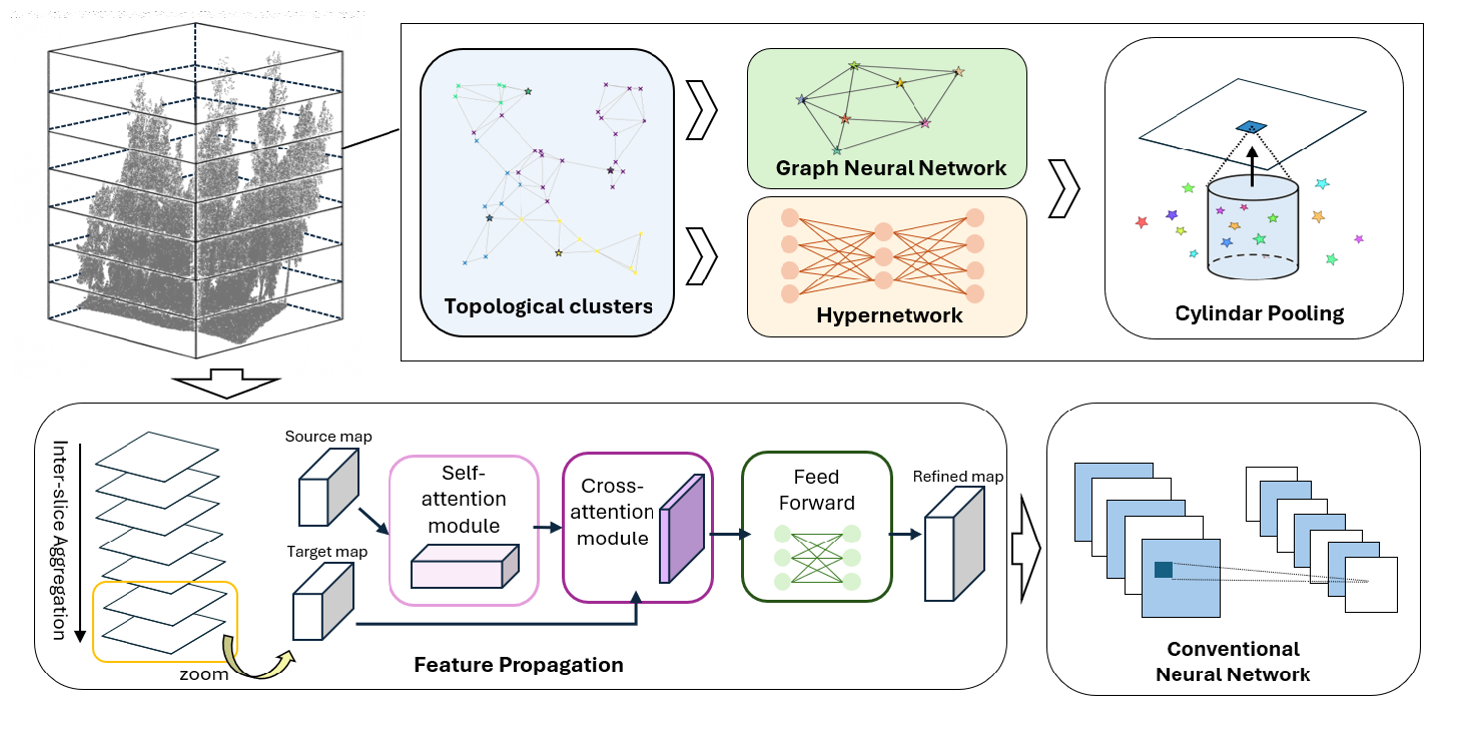

Fig. 1: Pipeline of the proposed framework (Reproduced from Fig. 1 in [1]).

- Make Points Comparable

We first prepare the data and use Discrete Morse Theory (DMT) to convert a raw point cloud into structure-based clusters and anchor points, which are more stable than density-based neighborhoods.

Input: forest LiDAR point cloud (TLS / MLS / ULS)

Terrain normalization: estimate ground surface and convert heights to height above ground

Height slicing: split the scene into thin vertical slices for stable, layer-wise processing

DMT-based clustering: in each slice, DMT groups points by following height-driven discrete flows and produces a representative anchor for each cluster

Output: per-slice structure-based clusters + anchors for the next stage
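The terrain normalization and height slicing steps above can be illustrated with a minimal sketch. It is not the pipeline from [1]: the ground estimate used here (lowest z value per coarse XY cell) is a simplifying assumption, the DMT clustering step is omitted, and the names `normalize_heights`, `slice_by_height`, `cell`, and `thickness` are hypothetical.

```python
import math
from collections import defaultdict

def normalize_heights(points, cell=1.0):
    """Subtract a coarse ground estimate (lowest z per XY cell) from each point.

    Assumption: a real pipeline would use a proper ground filter instead.
    """
    ground = {}
    for x, y, z in points:
        key = (math.floor(x / cell), math.floor(y / cell))
        ground[key] = min(z, ground.get(key, z))
    return [
        (x, y, z - ground[(math.floor(x / cell), math.floor(y / cell))])
        for x, y, z in points
    ]

def slice_by_height(points, thickness=0.5):
    """Group height-normalized points into horizontal slices of fixed thickness."""
    slices = defaultdict(list)
    for x, y, h in points:
        slices[int(h // thickness)].append((x, y, h))
    return dict(slices)

# Toy scene: two XY cells whose ground levels differ by 2 m.
points = [
    (0.2, 0.3, 10.0), (0.4, 0.1, 10.3), (0.5, 0.5, 11.2),
    (1.5, 0.2, 12.0), (1.6, 0.4, 13.1),
]
normalized = normalize_heights(points)
slices = slice_by_height(normalized)
```

After normalization, points at the same height above ground land in the same slice even though their raw z values differ, which is what makes the later layer-wise processing comparable across the plot.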

- Topology Features + AI

We learn compact features from the DMT anchors and project them onto a fixed 2D ground map.

Point encoding: use a small neural network (MLP: Multi-Layer Perceptron, a simple stack of linear layers with nonlinear activations) to embed each point into a feature vector

Anchor feature seeding: summarize member-point features inside each DMT cluster to form an anchor descriptor plus a few general geometry cues

Anchor context exchange: connect nearby anchors using kNN (k-Nearest Neighbors) and update them with a lightweight message-passing block

Local anchor–point update: refine features by aggregating information within each cluster neighborhood using an efficient attention-style pooling

Grid projection: pool anchor features onto a fixed-resolution 2D ground grid for each slice, producing per-slice feature maps

Output: a stack of 2D slice maps (one map per height slice)
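The grid projection step can be sketched as follows. The pooling operator is not specified above, so mean pooling is used here as an assumption, and `project_to_grid`, `cell`, `width`, and `height` are hypothetical names chosen for illustration.

```python
import math

def project_to_grid(anchors, cell=1.0, width=4, height=4):
    """Mean-pool anchor feature vectors into a fixed-size 2D ground grid.

    anchors: list of (x, y, feature_vector) for one height slice.
    Returns grid[row][col], holding a pooled vector or None for empty cells.
    """
    sums, counts = {}, {}
    for x, y, feat in anchors:
        col, row = math.floor(x / cell), math.floor(y / cell)
        if not (0 <= col < width and 0 <= row < height):
            continue  # anchor falls outside the plot extent
        acc = sums.setdefault((row, col), [0.0] * len(feat))
        for i, value in enumerate(feat):
            acc[i] += value
        counts[(row, col)] = counts.get((row, col), 0) + 1

    grid = [[None] * width for _ in range(height)]
    for (row, col), acc in sums.items():
        grid[row][col] = [value / counts[(row, col)] for value in acc]
    return grid

# Two anchors share cell (row 0, col 0); a third sits alone in (row 1, col 2).
anchors = [(0.2, 0.3, [1.0, 2.0]), (0.8, 0.6, [3.0, 4.0]), (2.5, 1.5, [5.0, 6.0])]
grid = project_to_grid(anchors)
```

Because the grid has a fixed resolution, every slice produces a map of the same shape regardless of how many anchors it contains, which is what allows the maps to be stacked.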

- Predict Tree Locations

We fuse the slice maps across height and directly predict tree-center locations on the ground plane.

Slice fusion: merge the stacked 2D slice maps from top to bottom using an efficient attention-style fusion with a learnable gate for each height band

Detection head: predict (1) a tree-center confidence map and (2) a sub-cell offset map on the ground grid

Center decoding: convert high-confidence grid cells + offsets into final 2D stem-center coordinates

Output: tree-center locations for the entire plot
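As a rough illustration of center decoding, the sketch below converts a confidence map and an offset map into coordinates. The details are assumptions rather than the procedure in [1]: offsets are taken to be fractions of a cell measured from the cell's lower corner, and a cell is kept only if it passes a threshold and is a local maximum in its 3x3 window. The names `decode_centers`, `cell`, and `threshold` are hypothetical.

```python
def decode_centers(conf, offsets, cell=1.0, threshold=0.5):
    """Turn a confidence map and a sub-cell offset map into (x, y, score) centers.

    conf[r][c] is a score in [0, 1]; offsets[r][c] is (dx, dy) in cell units.
    """
    rows, cols = len(conf), len(conf[0])
    centers = []
    for r in range(rows):
        for c in range(cols):
            score = conf[r][c]
            if score < threshold:
                continue
            # Collect the scores of the up-to-8 neighboring cells.
            neighbors = [
                conf[rr][cc]
                for rr in range(max(0, r - 1), min(rows, r + 2))
                for cc in range(max(0, c - 1), min(cols, c + 2))
                if (rr, cc) != (r, c)
            ]
            if any(n > score for n in neighbors):
                continue  # a stronger neighbor suppresses this cell
            dx, dy = offsets[r][c]
            centers.append(((c + dx) * cell, (r + dy) * cell, score))
    return centers

conf = [
    [0.1, 0.2, 0.1],
    [0.2, 0.9, 0.6],
    [0.1, 0.3, 0.1],
]
offsets = [[(0.5, 0.5)] * 3 for _ in range(3)]
offsets[1][1] = (0.25, 0.75)
centers = decode_centers(conf, offsets, cell=2.0)
```

In this toy example the cell with score 0.6 passes the threshold but is suppressed by its 0.9 neighbor, so a single center is returned. The offset is what lets the final coordinate fall between grid lines rather than snapping to cell corners.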

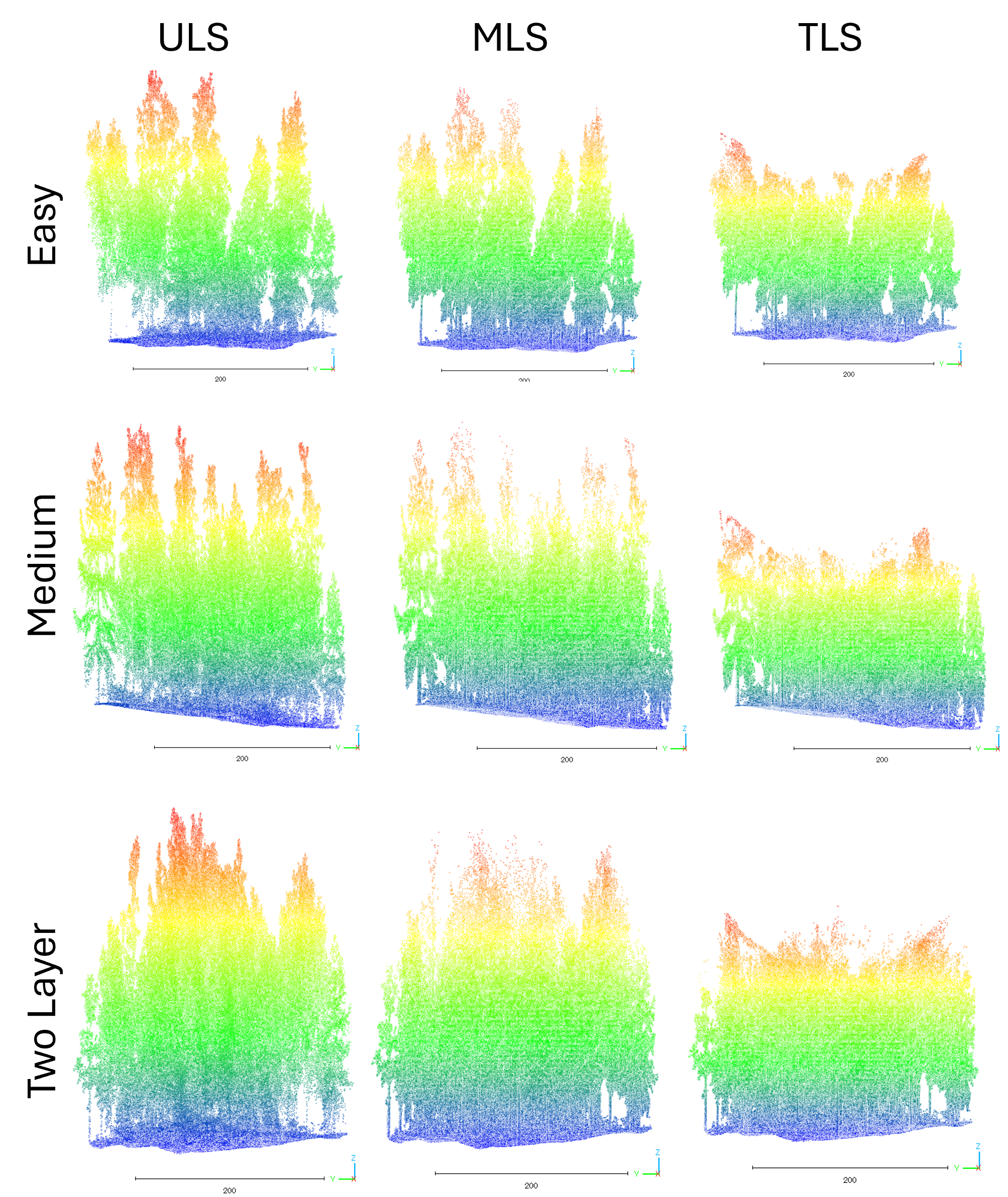

Data

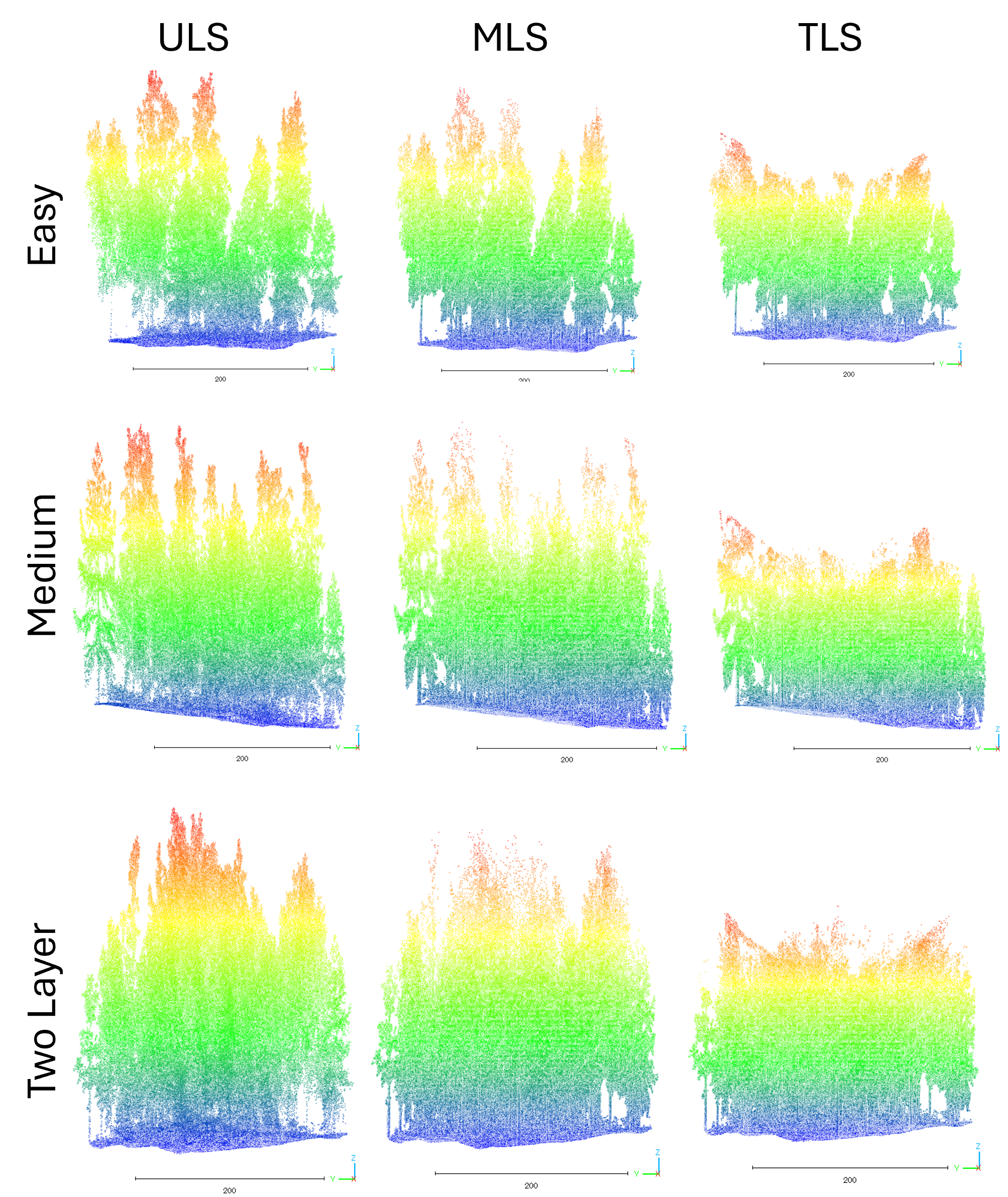

We use Boreal3D [2], a public synthetic benchmark of boreal mixed forests (spruce, pine, birch). For each forest plot, the dataset provides three platform-specific point clouds (TLS, MLS, and ULS) generated from the same underlying forest geometry. This design isolates platform effects (viewpoint, sampling density, and occlusion) from scene differences, making it suitable for evaluating cross-platform robustness. In total, the dataset contains 48,403 trees and includes plots of varying difficulty (Easy, Medium, TwoLayer). For training efficiency, each plot is further divided into three non-overlapping subplots.

Fig. 2: Sample plot from the Boreal3D dataset (Reproduced from Fig. 3 in [1]). Colors represent the z-coordinate before normalization of each point, ranging from purple (low) to red (high).

Results

Before reading the results in the tables below, we briefly explain the metrics.

F1 score measures how well the detector finds the right trees while avoiding wrong detections. In simple terms, it becomes high only when the method misses few true trees and also does not produce many false alarms (higher is better).

MRE (mean radial error) measures localization accuracy: after matching predicted tree centers to ground truth, it reports the average horizontal offset between them in meters (lower is better).
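A small sketch shows how the two metrics can be computed together. The matching rule is not described above, so this example assumes greedy one-to-one matching by increasing distance with a hypothetical cutoff `max_dist`. F1 is the harmonic mean of precision and recall, and MRE is averaged over matched pairs only.

```python
import math

def evaluate(pred, truth, max_dist=1.0):
    """Greedy one-to-one matching by distance, then F1 and mean radial error."""
    pairs = sorted(
        (math.dist(p, t), i, j)
        for i, p in enumerate(pred)
        for j, t in enumerate(truth)
    )
    used_pred, used_truth, errors = set(), set(), []
    for d, i, j in pairs:
        if d > max_dist:
            break  # remaining pairs are even farther apart
        if i in used_pred or j in used_truth:
            continue
        used_pred.add(i)
        used_truth.add(j)
        errors.append(d)

    tp = len(errors)  # matched pairs count as true positives
    precision = tp / len(pred) if pred else 0.0
    recall = tp / len(truth) if truth else 0.0
    f1 = 2 * precision * recall / (precision + recall) if tp else 0.0
    mre = sum(errors) / tp if tp else float("nan")
    return f1, mre

truth = [(0.0, 0.0), (5.0, 0.0), (10.0, 0.0)]
pred = [(0.3, 0.4), (5.0, 0.2), (20.0, 20.0)]
f1, mre = evaluate(pred, truth)
```

Here two of three predictions match two of three true trees, so precision and recall are both 2/3 and F1 is 2/3. The matched offsets are 0.5 m and 0.2 m, giving an MRE of 0.35 m. The unmatched prediction lowers F1 but does not enter the MRE.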

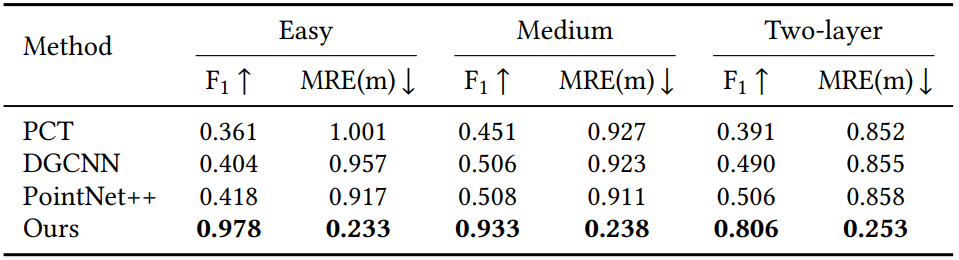

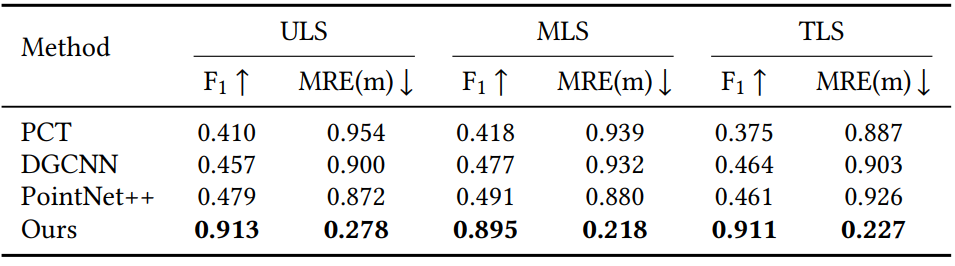

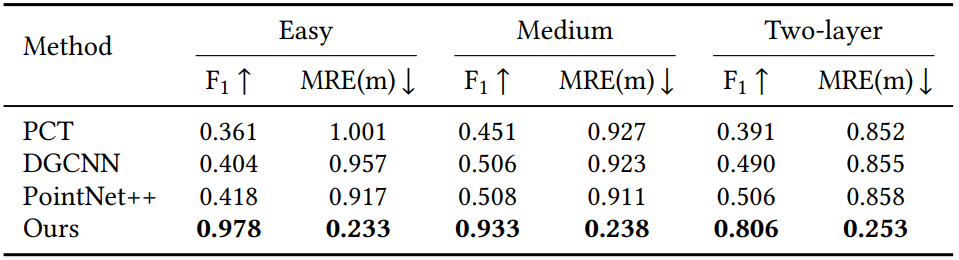

Platform-wise comparison (TLS / MLS / ULS)

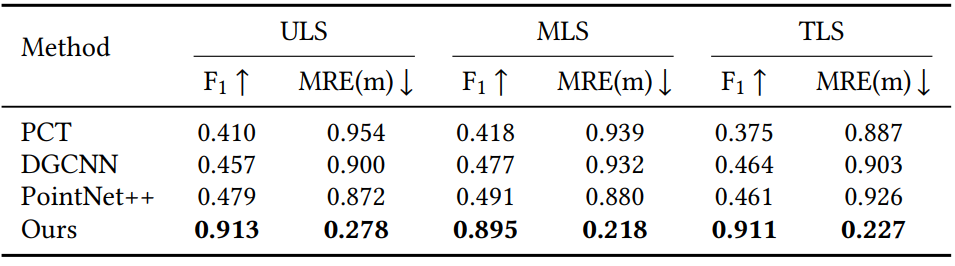

We evaluate whether one detector can consistently find tree centers across three LiDAR platforms with very different viewpoints and sampling densities.

– Our method is the most accurate on all three platforms, with F1 between 0.895 and 0.913 (ULS 0.913, MLS 0.895, TLS 0.911).

– At the same time, it produces much smaller location errors, with mean radial error (MRE) around 0.22–0.28 m (ULS 0.278 m, MLS 0.218 m, TLS 0.227 m).

– In contrast, point-based baselines (PCT [3] / DGCNN [4] / PointNet++ [5]) have low F1 (≈0.38–0.49) and large errors (≈0.87–0.95 m), showing that standard point-based neighborhoods become unreliable when platform sampling patterns shift.

Table 1. Platform-wise comparison (Reproduced from Table 2 in [1]).

Our detector stays reliable even when the platform changes, while conventional point-based backbones degrade sharply.

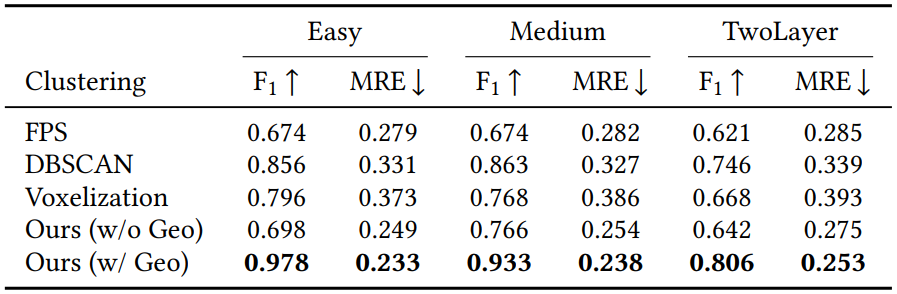

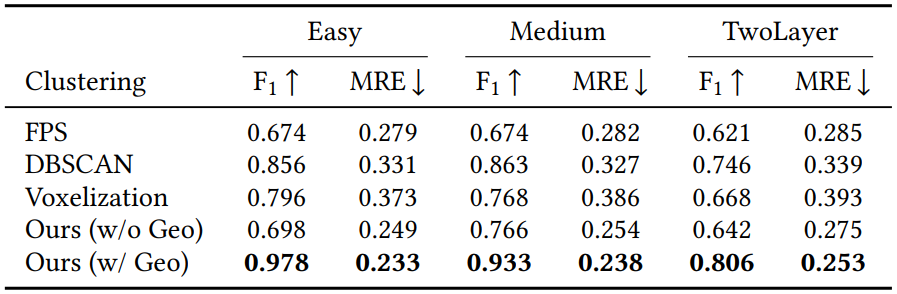

Difficulty-wise comparison (Easy / Medium / TwoLayer)

We also test robustness against increasing forest complexity, from simple plots to layered canopies with stronger occlusion.

– Our method remains best across all difficulty tiers:

Easy: F1 0.978, MRE 0.233 m

Medium: F1 0.933, MRE 0.238 m

TwoLayer: F1 0.806, MRE 0.253 m

– Baselines improve slightly on Easy/Medium but still lag far behind, and they struggle most in TwoLayer scenes where occlusion and clutter are severe.

Table 2. Difficulty-wise comparison (Reproduced from Table 3 in [1]).

Overall, the key takeaway is that the proposed topology-guided representation degrades gracefully as forests become harder, instead of collapsing.

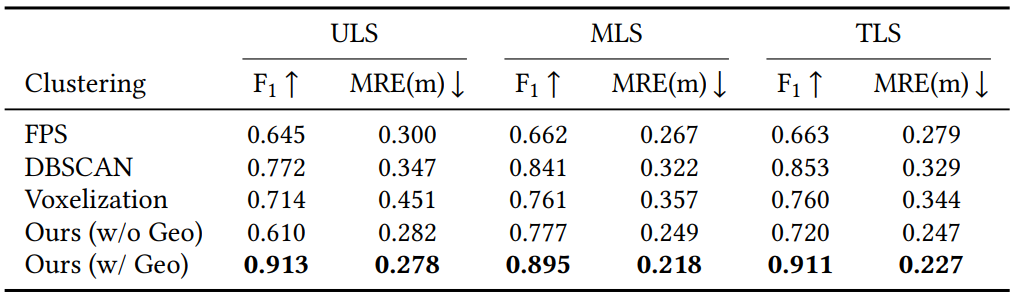

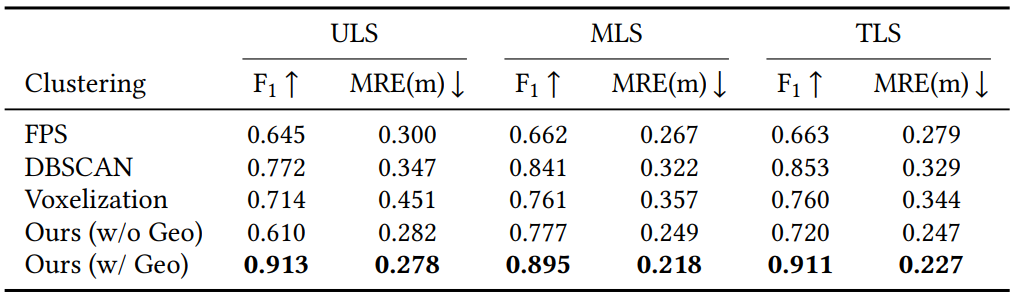

Ablation on clustering representation (platform-wise)

This ablation asks: before the detector head, how should we form “anchors” and local neighborhoods?

We compare four alternatives:

FPS [6] anchors (sample points, attach kNN members)

DBSCAN [7] clusters (density-based clustering)

Voxelization [8] anchors (grid cell centroids)

Ours (topology-guided clusters via DMT), with/without simple geometric cues
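To make two of the baseline anchor strategies concrete, the sketch below implements plain farthest point sampling and voxel centroids. These are generic textbook versions written for illustration, not the exact configurations used in the ablation. The function names and the `size` parameter are hypothetical, and the kNN member attachment for FPS is omitted.

```python
import math
from collections import defaultdict

def farthest_point_sampling(points, k, start=0):
    """Pick k indices, each time taking the point farthest from those chosen so far."""
    chosen = [start]
    dist = [math.dist(p, points[start]) for p in points]
    while len(chosen) < min(k, len(points)):
        nxt = max(range(len(points)), key=dist.__getitem__)
        chosen.append(nxt)
        # Track each point's distance to its nearest chosen anchor.
        dist = [min(d, math.dist(p, points[nxt])) for d, p in zip(dist, points)]
    return chosen

def voxel_centroids(points, size=1.0):
    """Return one anchor per occupied voxel: the centroid of the points inside it."""
    buckets = defaultdict(list)
    for p in points:
        buckets[tuple(math.floor(c / size) for c in p)].append(p)
    return {
        key: tuple(sum(axis) / len(pts) for axis in zip(*pts))
        for key, pts in buckets.items()
    }

points = [(0.0, 0.0, 0.0), (0.5, 0.5, 0.0), (4.0, 0.0, 0.0), (4.0, 3.0, 0.0)]
fps = farthest_point_sampling(points, 3)
anchors = voxel_centroids(points, size=1.0)
```

Both strategies place anchors according to where samples happen to fall, either by spatial spread (FPS) or by a fixed grid (voxels), so the anchors move when the sampling pattern changes. That dependence is what the topology-guided clusters are meant to reduce.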

Results show:

– Our full model (Ours w/ Geo) is best on every platform:

ULS 0.913 / 0.278 m, MLS 0.895 / 0.218 m, TLS 0.911 / 0.227 m

– Removing geometric cues (Ours w/o Geo) drops F1 noticeably (especially on ULS), showing that cheap geometry statistics add strong signal at very low cost.

Table 3. Ablation on clustering representation (Reproduced from Table 4 in [1]).

Topology-guided clustering + lightweight geometry cues gives the most stable anchors for cross-platform tree detection.

Ablation on clustering representation (difficulty-wise)

The same clustering ablation is repeated under different forest complexity.

– Again, Ours (w/ Geo) is the best in all tiers:

Easy 0.978 / 0.233 m, Medium 0.933 / 0.238 m, TwoLayer 0.806 / 0.253 m

– The gap becomes largest in TwoLayer, which is exactly where density drift and occlusion are strongest.

Table 4. Ablation on difficulty tier (Reproduced from Table 5 in [1]).

This supports the main claim: topology-based clusters preserve “tree-like continuity” better than purely density-based or grid-based neighborhoods.

Key Outcomes & Recommendations

Key outcomes

Cross-platform tree-center detection is feasible and reliable. The proposed detector consistently finds tree centers across TLS, MLS, and ULS, even though these platforms observe forests with very different viewpoints and sampling densities.

Topology-guided anchors improve robustness. By using Discrete Morse Theory (DMT) to form structure-based clusters and anchors, the model relies less on density-dependent neighborhoods, which helps under occlusion and density drift.

High detection reliability with accurate localization. On the synthetic Boreal3D dataset, the method achieves F1 between 0.895 and 0.913 across platforms and keeps the mean radial error between 0.22 and 0.28 m, clearly outperforming point-based baselines in both detection reliability and localization accuracy.

Recommendations

Leverage synthetic data for scalable training. High-quality synthetic forests enable large-scale training and controlled platform variations, while real data collection and labeling are costly. A practical strategy is to pretrain extensively in simulation and use limited real data for adaptation, making this a strong direction for sim-to-real deployment.

Extend the framework to tree metrics, not only locations. Once stable tree centers are obtained, the same representation can be extended to predict additional attributes such as tree height and diameter at breast height, ideally with confidence and uncertainty estimates to support practical decision-making.

Afterword

On paper, this project is about detecting tree centers from forest point clouds. In practice, the hardest part was not writing a new module; it was dealing with how unstable point clouds can be in the real world. Occlusion removes exactly the parts you want to rely on, and different LiDAR platforms observe the same forest with different viewpoints and sampling densities. That means the local neighborhoods that many standard methods depend on can change dramatically from one platform to another. Early on, I tried to “out-learn” these issues with stronger networks and tuning, but I gradually realized a simple lesson: if the representation shifts, the model spends its capacity chasing the shift instead of finding trees.

Most of my time went into careful verification: ground normalization choices that affect height consistency, slice settings that control how much structure each layer carries, and the alignment between topological anchors and the neural features learned later. Debugging often felt “unexciting,” but it was essential. When performance dropped on a different platform, the reason was rarely “the model failed” in a simple way; it was usually that one step amplified a platform gap. When something broke, I traced it back to one assumption, changed that piece, and reran the same checks across the platforms.

During my research visit to the University of Maryland, College Park, I had the chance to present my work in seminars, discuss ideas with researchers from different backgrounds, and get direct feedback on both the technical content and how I communicated it. Those discussions made something very clear: if I start by saying “topology” or “DMT,” many listeners naturally treat it as jargon. But if I first explain the real pain point, occlusion and density drift making tree detection unreliable at scale, then place DMT as a tool for extracting stable structure, the story becomes easy to follow. Working across time zones and maintaining frequent meetings also taught me to simplify explanations without losing the core logic: fewer terms, clearer motivation, and more emphasis on what each design choice actually solves.

In the end, my biggest takeaway is not a specific trick or component, but a research habit: respect uncertainty, slow down your assumptions, and verify aggressively. Good research is not only about producing a strong result; it is about building an explanation that remains credible when conditions change. This project reminded me that the work that lasts is usually the work that is stable, testable, and explainable, even when the data is imperfect.

Acknowledgement

I am sincerely grateful to the colleagues and hosts at the University of Maryland, College Park, for the opportunity to present, discuss, and learn through this visit. I also appreciate the support from the DSEP program and the MDA Education Promotion Office at the University of Tsukuba, whose administrative and financial assistance made the research visit and related activities possible. Finally, I would like to thank my advisor and collaborators for their guidance and feedback throughout the project.

References

[1] Yiliu Tan, Xin Yang, Xin Xu, Jingyi Zhang, Yunjian Cao, and Maiko Shigeno. 2026. Graph-Topological Deep Detector for Cross-Platform Forest Point Clouds. In Proceedings of the 2025 8th International Conference on Computational Intelligence and Intelligent Systems (CIIS ’25). Association for Computing Machinery, New York, NY, USA, 15–22. https://doi.org/10.1145/3787256.3787259

[2] Jing Liu, Duanchu Wang, Haoran Gong, Chongyu Wang, Jihua Zhu, and Di Wang. 2025. Advancing the Understanding of Fine-Grained 3D Forest Structures using Digital Cousins and Simulation-to-Reality: Methods and Datasets. arXiv preprint arXiv:2501.03637 (2025).

[3] Meng-Hao Guo, Jun-Xiong Cai, Zheng-Ning Liu, Tai-Jiang Mu, Ralph R. Martin, and Shi-Min Hu. 2021. PCT: Point Cloud Transformer. Computational Visual Media 7, 2 (2021), 187–199.

[4] Yue Wang, Yongbin Sun, Ziwei Liu, Sanjay E. Sarma, Michael M. Bronstein, and Justin M. Solomon. 2019. Dynamic Graph CNN for Learning on Point Clouds. ACM Transactions on Graphics 38, 5 (2019), 1–12.

[5] Charles Ruizhongtai Qi, Li Yi, Hao Su, and Leonidas J. Guibas. 2017. PointNet++: Deep Hierarchical Feature Learning on Point Sets in a Metric Space. In Advances in Neural Information Processing Systems, Vol. 30. 5099–5108.

[6] Yu Wu, Shengchao Zhong, Yajing Ma, Yu Zhang, and Mingshun Liu. 2021. Classification of Typical Tree Species in Laser Point Cloud Based on Deep Learning. Remote Sensing 13, 23 (2021), 4750.

[7] Hongping Fu, Hao Li, Yanqi Dong, Fu Xu, and Feixiang Chen. 2022. Segmenting Individual Tree from TLS Point Clouds Using Improved DBSCAN. Forests 13, 4 (2022), 566.

[8] Shengjun Zhang, Xin Fei, and Yueqi Duan. 2024. GeoAuxNet: Towards Universal 3D Representation Learning for Multi-Sensor Point Clouds. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR). 20019–20028.